Humanly Case Study: How AI Detection Rebuilt Trust in a Changing Insurance Landscape

The Scope: A New Fraud Challenge Emerges

The insurance industry has always lived at the intersection of trust, risk and verification. As digital transformation accelerated, something unexpected happened. The very tools that helped insurers modernise their customer experience also created the perfect environment for new kinds of fraud.

In recent years, claims handlers began facing a growing number of images that did not look quite right: accident photos with unnatural shadows, property damage that seemed a little too symmetrical, medical imagery that did not match any known diagnostic pattern. At first these cases seemed unusual; then they became common.

This was not traditional fraud. It was the arrival of synthetic media.

Images created or altered by artificial intelligence.

Doctored accident reports.

AI-generated property damage.

Manipulated invoices and repair evidence.

Synthetic medical imagery that appeared convincing at first glance.

Conventional fraud systems were never designed to deal with this. They relied on human judgement and static rule sets, and these methods simply could not keep pace. The result was clear. Claims leakage increased, manual verification became overwhelming, and customer experience suffered as teams struggled to determine what was real and what was not.

Insurance leaders knew they needed something new. A way to protect their teams, their customers, and their budgets from a type of fraud that evolved faster than the tools designed to stop it.

This is where Humanly began.

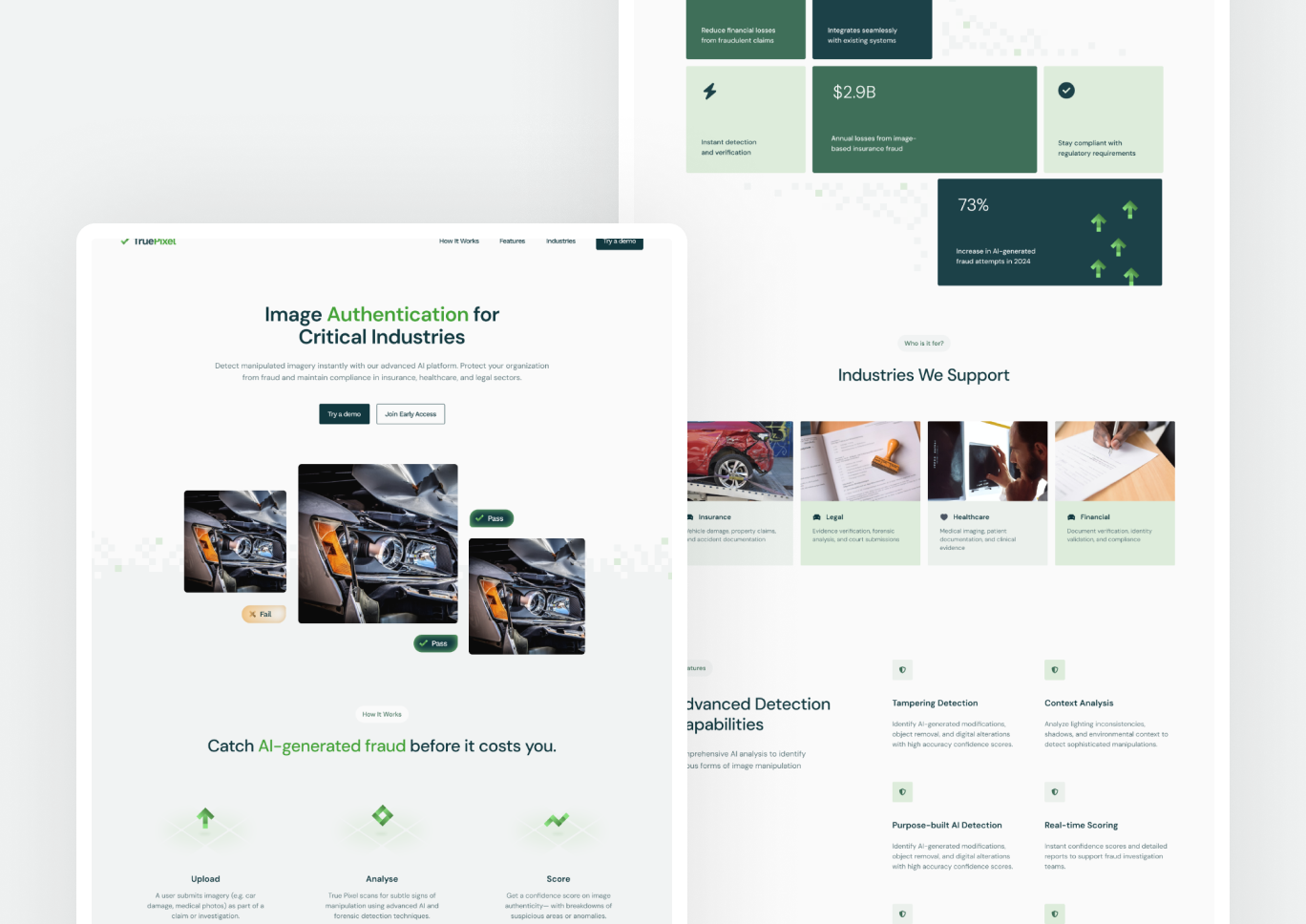

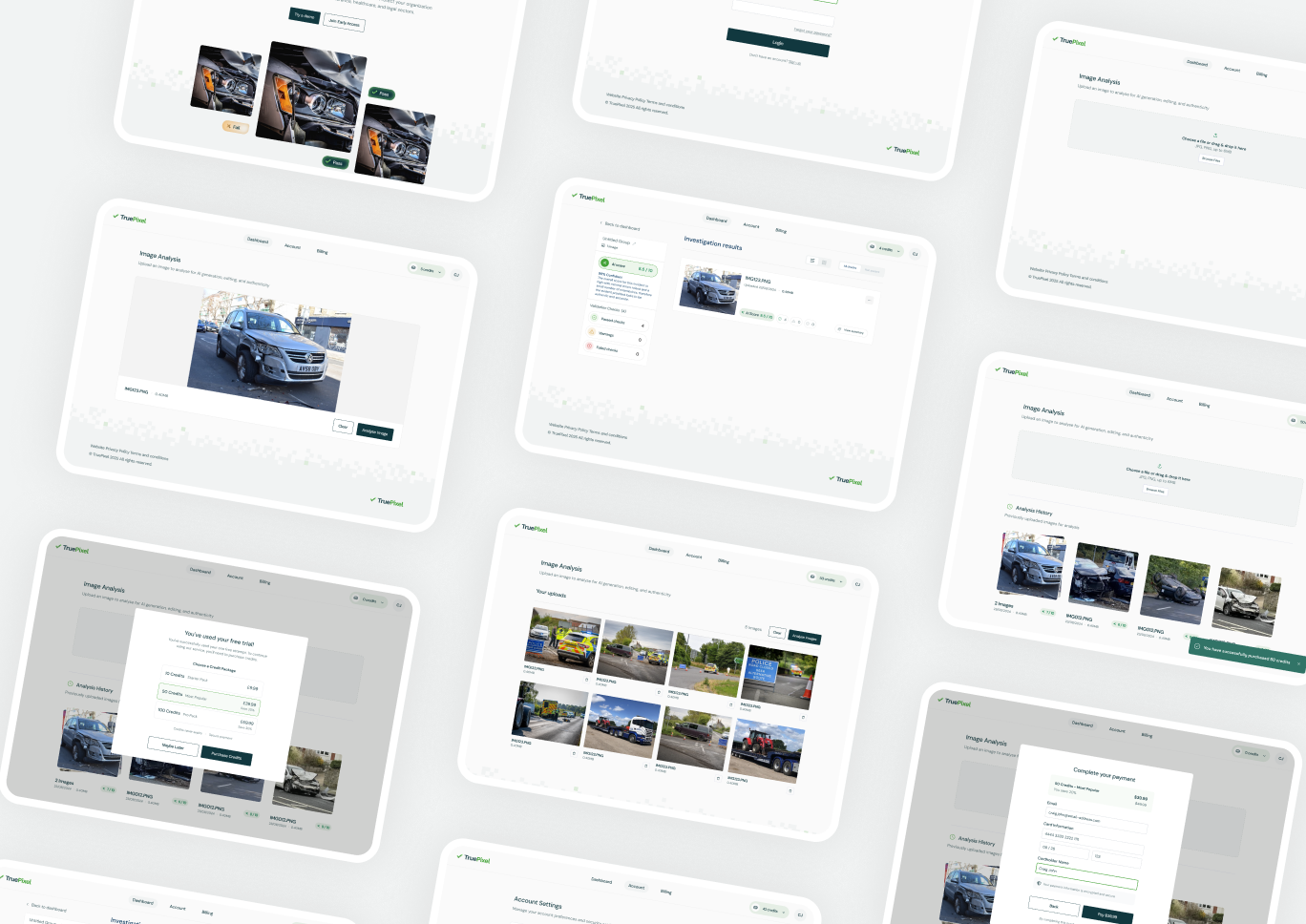

The Solution: Humanly, an AI Detection Tool Built for Digital Evidence

AI XON, an AI development company specialising in advanced integrity analysis, partnered with industry experts to create Humanly. The mission was straightforward. Build an AI detection tool capable of revealing whether a submitted image was genuine, altered or fully created by artificial intelligence.

But achieving that required far more than a simple filter. Fraudsters were becoming increasingly sophisticated, and off-the-shelf deepfake detection tools were limited to narrow use cases. Humanly needed to operate across every insurance line, detect multiple forms of manipulation, and integrate seamlessly into existing claims systems.

Humanly uses a blended model of digital forensics and machine intelligence.

It examines the image in layers.

It analyses noise patterns that reveal whether the image has been artificially smoothed or sharpened.

It evaluates light consistency, shadow geometry and pixel-level irregularities.

It inspects metadata to determine whether timestamps, locations or device information have been altered.

It runs advanced AI models trained on thousands of synthetic images to identify subtle signatures that a human eye would never catch.
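
To make the layered approach concrete, here is a minimal sketch of two of the checks described above, a noise-residual test and a metadata consistency test, combined into one list of findings. It is an illustration under stated assumptions, not Humanly's implementation: the function names, the metadata keys (`captured_at`, `device_model`) and the `min_noise_variance` threshold are all hypothetical, and a production system would use far richer forensic features.

```python
from datetime import datetime
from statistics import pvariance


def noise_residual_variance(pixels):
    """Variance of a simple high-pass residual over a grayscale grid.

    Each interior pixel is compared with the mean of its four neighbours.
    A value near zero suggests the image carries almost no sensor noise,
    which can be a sign of artificial smoothing. Needs at least a 3x3 grid.
    """
    height, width = len(pixels), len(pixels[0])
    residuals = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            neighbour_mean = (
                pixels[y - 1][x] + pixels[y + 1][x]
                + pixels[y][x - 1] + pixels[y][x + 1]
            ) / 4
            residuals.append(pixels[y][x] - neighbour_mean)
    return pvariance(residuals)


def metadata_issues(meta, submitted_at):
    """Return human-readable problems found in hypothetical image metadata."""
    issues = []
    captured_at = meta.get("captured_at")
    if captured_at is None:
        issues.append("missing capture timestamp")
    elif captured_at > submitted_at:
        # A photo cannot be taken after it was uploaded.
        issues.append("capture timestamp is later than submission")
    if not meta.get("device_model"):
        issues.append("missing device information")
    return issues


def assess(pixels, meta, submitted_at, min_noise_variance=1.0):
    """Run both layers and collect findings; an empty list means no flags.

    min_noise_variance is an illustrative threshold, not a calibrated one.
    """
    findings = []
    if noise_residual_variance(pixels) < min_noise_variance:
        findings.append("noise residual unusually low (possible smoothing)")
    findings.extend(metadata_issues(meta, submitted_at))
    return findings
```

Returning findings as plain-language strings, rather than a single opaque score, is one way to keep each flag explainable to the adjuster who eventually reads it.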

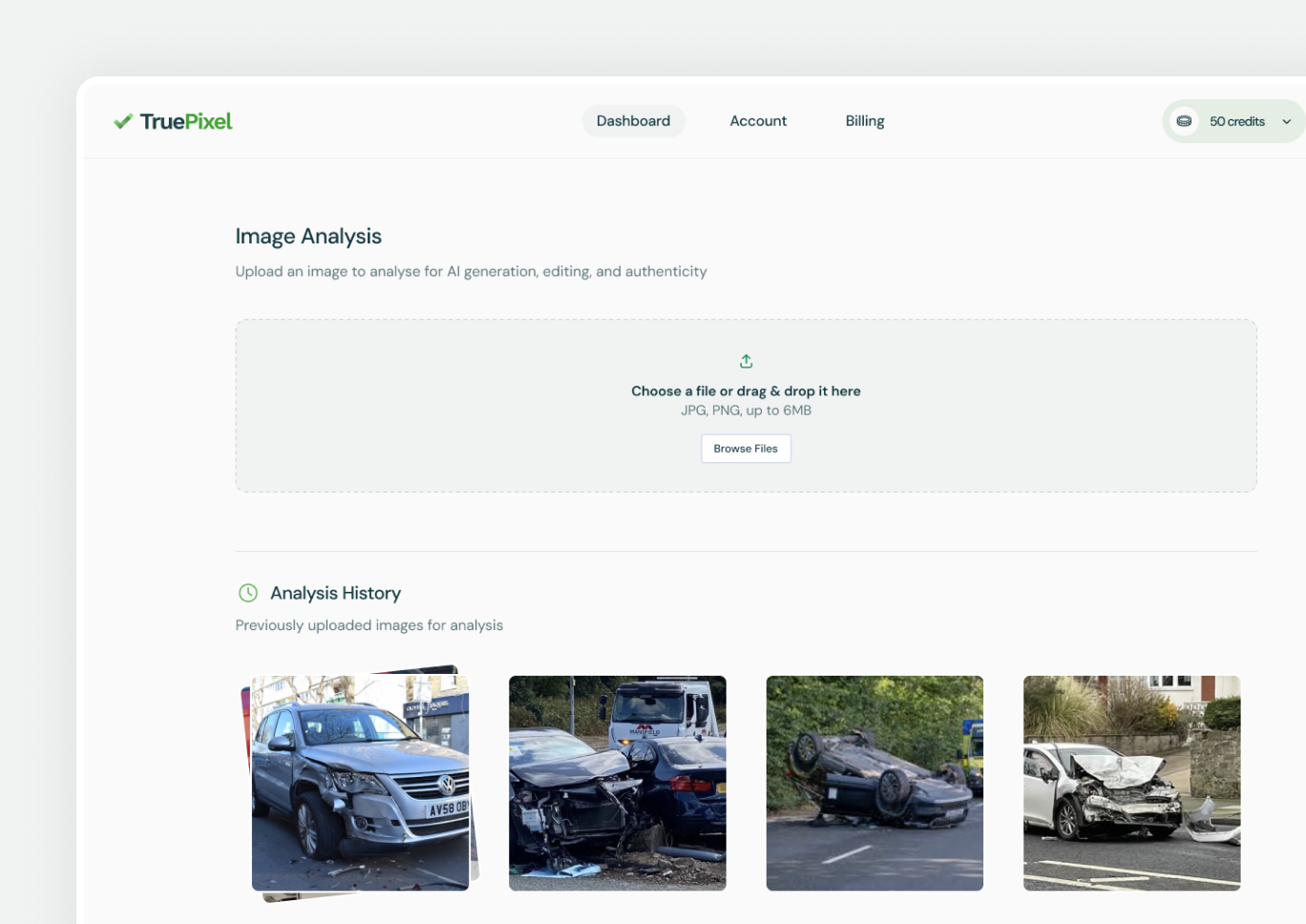

This is what allows Humanly to work across auto, property, health and life insurance. It does not just look for a single form of manipulation; it studies the entire fingerprint of an image.

As soon as a claimant uploads their evidence, Humanly quietly performs a deep assessment in the background. Suspicious content is flagged before it reaches an adjuster, allowing teams to focus on cases that genuinely require expertise rather than sifting through noise.
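
The triage step described above can be sketched as a simple routing function. This is a hedged illustration only: the `Upload` type, its fields and the rule of ordering flagged cases by number of findings are assumptions made for the example, not details of how Humanly prioritises work.

```python
from dataclasses import dataclass, field


@dataclass
class Upload:
    """Hypothetical record for one piece of submitted evidence."""
    claim_id: str
    findings: list[str] = field(default_factory=list)


def triage(uploads):
    """Split uploads into flagged and cleared groups.

    Flagged uploads (those with at least one finding) are ordered so the
    cases with the most findings are reviewed first. Cleared uploads keep
    their original order and can continue through normal processing.
    """
    flagged = [u for u in uploads if u.findings]
    cleared = [u for u in uploads if not u.findings]
    flagged.sort(key=lambda u: len(u.findings), reverse=True)
    return flagged, cleared


# Example: only the uploads with findings reach the adjuster queue.
queue, passed = triage([
    Upload("C-1"),
    Upload("C-2", ["missing device information"]),
    Upload("C-3", ["missing capture timestamp", "noise residual unusually low"]),
])
```

Under these assumptions, adjusters would see the flagged queue only, while cleared uploads move on without manual review.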

The Impact: Fraud Down, Trust Up

One of the first major deployments of Humanly was for a leading ECO4 installer. The results were immediate and significant.

Ninety-eight per cent of fraudulent image claims were prevented within six months.

This translated into millions of pounds in avoided losses.

Operational workload was transformed.

Instead of investigating every unusual image, adjusters received a small number of genuinely high-risk cases.

Suppliers benefited too.

Legitimate installers no longer had to compete with fraudulent submissions, and approval processes became faster and more transparent.

Customers saw improvements in service.

Fewer delays, fewer back-and-forth requests for clarification, and clearer communication from the insurer or installer.

But the most important result was something wider.

Humanly restored confidence.

It reassured insurers that their digital evidence pipeline was secure.

It reassured suppliers that honest work would always be recognised.

It reassured customers that their claims would be handled fairly.

Humanly demonstrated that when used correctly, AI can protect trust rather than undermine it.

A New Chapter for Anti-AI Technologies

The rise of synthetic media will not slow down. As generative models evolve, insurers and cyber security teams will face increasingly complex evidence, from deepfake images to engineered documentation. Humanly represents a new category of defence, where AI detection and digital forensics work together to validate the integrity of the digital world.

As an AI development company, AI XON continues to evolve Humanly’s capabilities. The system constantly learns from new forms of synthetic fraud, updates its detection techniques and strengthens its position as one of the most advanced deepfake detection tools available.

Humanly is not simply a product. It is the beginning of a new era of evidence integrity. An era where insurers and cyber teams can rely on automation that is transparent, explainable and built for the realities of modern digital risk.